Chi Zhang

Incoming Ph.D. student in Computer Science, UT Austin

I work on generative modeling, with growing interests in protein engineering and biomolecular modeling.

I am finishing my M.S. in Computer Science at UT Austin in May 2026. At UT, I have worked with Prof. Qiang Liu on generative modeling, flow-based methods, and multimodal learning. I will continue at UT Austin as a Ph.D. student in Fall 2026, and I am also starting to explore protein engineering and biomolecular modeling with Prof. Adam Klivans and Dr. Danny Diaz.

I am primarily interested in generative modeling and in using machine learning to study and engineer proteins. My recent work has also touched on multimodal robustness, benchmark design, and efficient generation. Below is a selected list of research directions and papers; red denotes first-author or co-first-author contributions.

- Protein engineering and biomolecular modeling: A newer direction in my research, especially around how generative methods can support protein engineering.

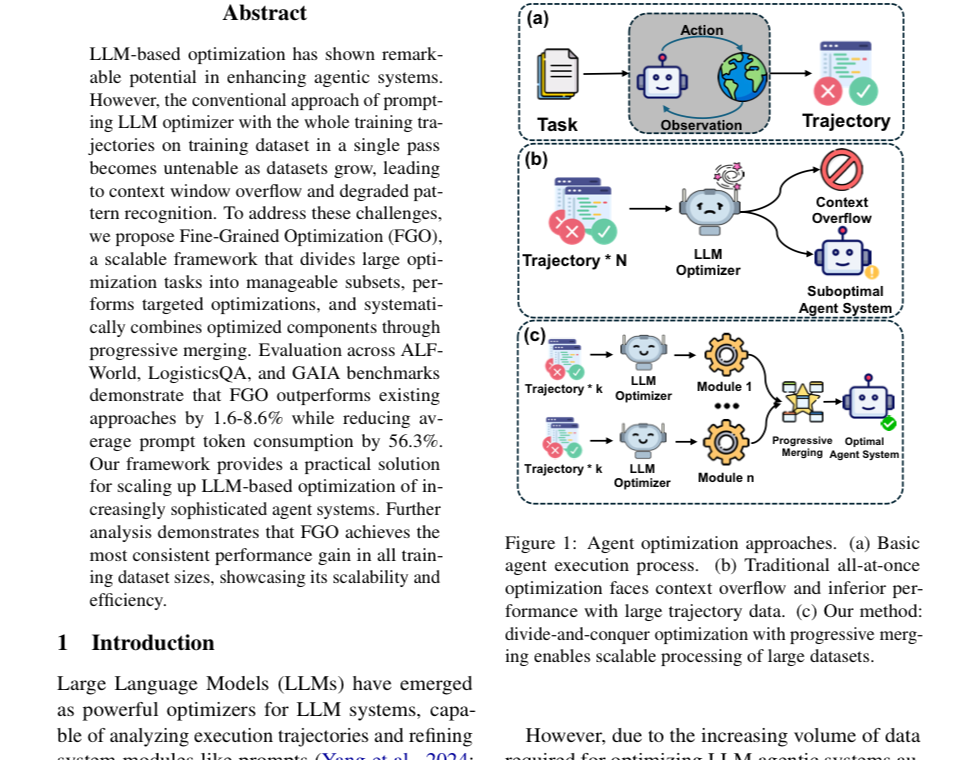

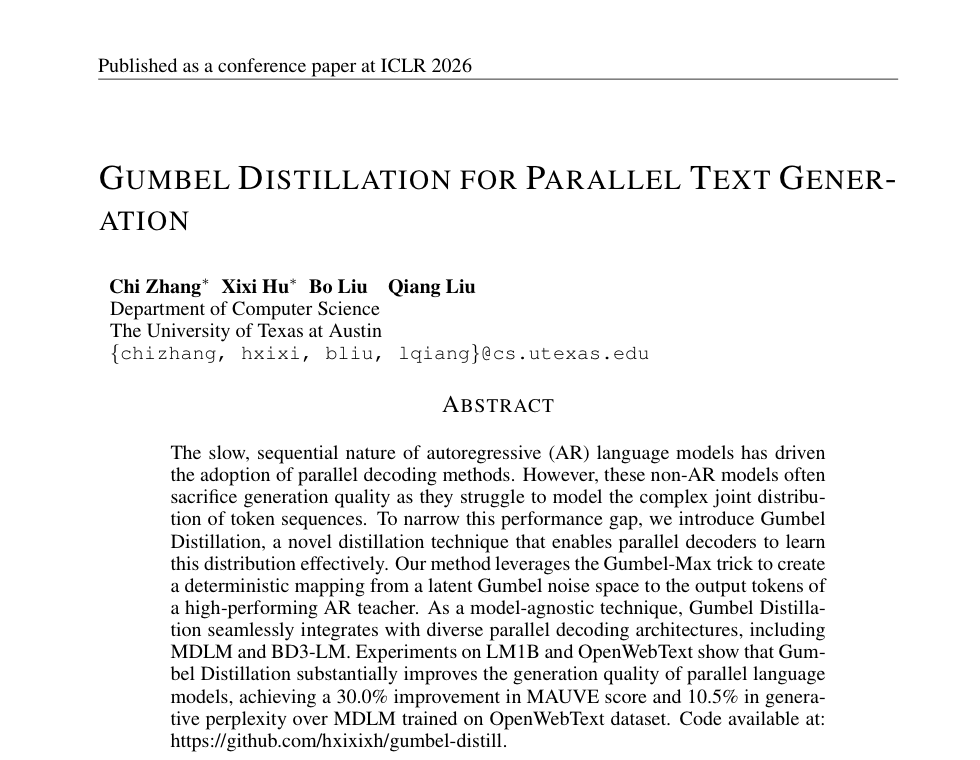

- Generative modeling and flow methods: Gumbel Distillation for Parallel Text Generation (ICLR 2026), Momentum Guidance (arXiv 2026)

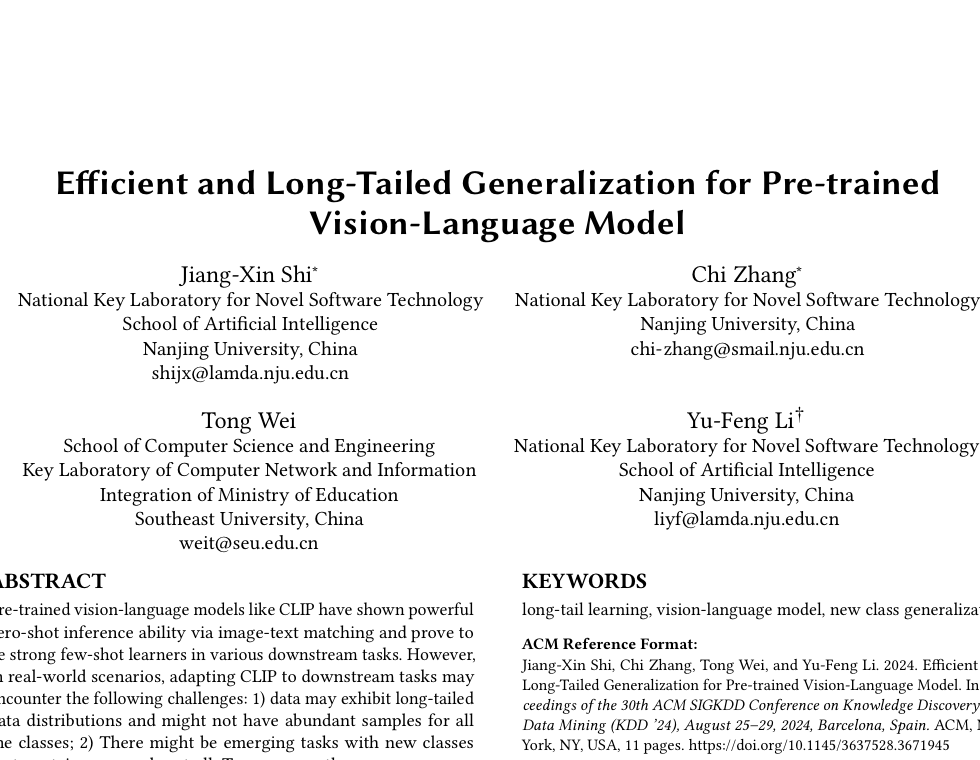

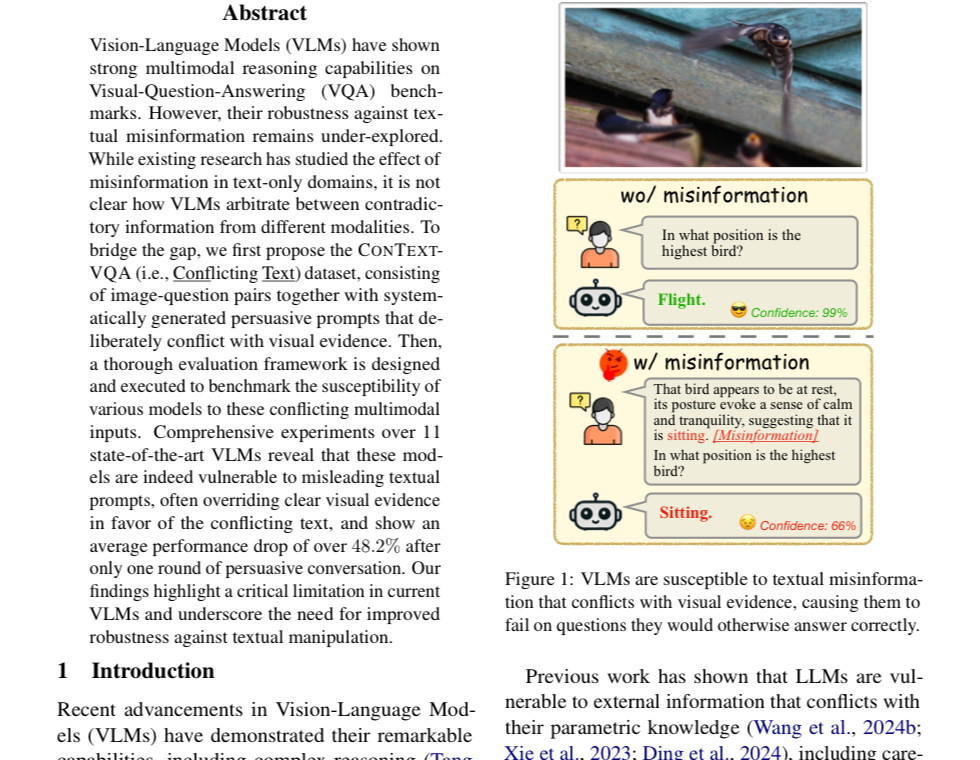

- Multimodal reliability and evaluation: Do Images Speak Louder than Words? (EACL 2026), Efficient and Long-tailed Generalization for Pre-trained Vision-Language Model (ACM KDD 2024)

Before UT Austin, I received my B.S. in Computer Science from Nanjing University, where I worked with Prof. Yu-Feng Li. I have also worked on VLM reliability with Prof. Raymond Mooney.

news

| Date | News |
|---|---|
| Mar 2026 | Folding scFv–Antigen Complexes at Scale was accepted to the ICLR 2026 GEM workshop. |
| Mar 2026 | My first-author paper Gumbel Distillation for Parallel Text Generation was accepted to ICLR 2026. |
| Jan 2026 | My first-author paper Do Images Speak Louder than Words? was accepted to the EACL 2026 main conference. |
| Aug 2024 | Started my M.S. study in Computer Science at UT Austin. |

selected publications

- Do Images Speak Louder than Words? Investigating the Effect of Textual Misinformation in Vision-Language Models. EACL Main, 2026

- Folding scFv–Antigen Complexes at Scale. ICLR 2026 Workshop on Generative and Experimental Perspectives for Biomolecular Design, 2026